There are few instances when the data you have are exactly what you need, particularly for feeding into analytic tools of various kinds. This is one of the reasons I think it’s so important for a significant subset of digital humanists to have some basic programming skills; you don’t need to be building tools, sometimes you’re just building data. Otherwise, not only are you dependent on the tools built by others, but you’re also dependent on the data provided by others.

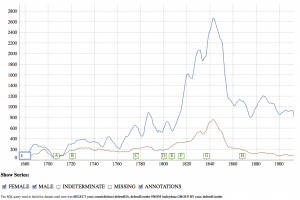

Recently we’ve been working on better integration of Voyeur Tools with the Old Bailey archives. Currently we have a cool prototype that allows you to do an Old Bailey query and to save the results as a Zotero entry and/or send the document directly to Voyeur Tools. The URL that is sent to Voyeur contains parameters that cause Voyeur to go fetch the individual trial accounts from Old Bailey via its experimental API. That’s great except that going to fetch all of those documents adds considerable latency to the system, which for larger collections can cause network timeouts. It would be preferable to have a local document store in Voyeur Tools.
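
To make the idea of a local document store a bit more concrete, here is a minimal sketch in Python of a disk-backed cache that only goes to the network the first time a document is requested. Everything in it is an assumption made for illustration: the `LocalDocumentStore` class, the `fetch` callable standing in for a call to the Old Bailey API, and the document identifiers are all hypothetical, and none of this reflects how Voyeur Tools is actually implemented.

```python
import hashlib
from pathlib import Path
from typing import Callable


class LocalDocumentStore:
    """Hypothetical disk cache: fetch a document remotely once, then reuse it."""

    def __init__(self, root: Path, fetch: Callable[[str], str]) -> None:
        self.root = Path(root)
        self.root.mkdir(parents=True, exist_ok=True)
        # `fetch` is an assumed stand-in for whatever retrieves a document
        # from a remote source (for example, an HTTP request to an API).
        self.fetch = fetch

    def _path_for(self, doc_id: str) -> Path:
        # Hash the identifier so that any string is safe to use as a file name.
        digest = hashlib.sha256(doc_id.encode("utf-8")).hexdigest()
        return self.root / f"{digest}.txt"

    def get(self, doc_id: str) -> str:
        path = self._path_for(doc_id)
        if path.exists():
            # Cache hit: no network round trip, so no added latency.
            return path.read_text(encoding="utf-8")
        # Cache miss: pay the remote cost once and keep a local copy.
        text = self.fetch(doc_id)
        path.write_text(text, encoding="utf-8")
        return text
```

With something like this in place, a large collection would only be slow the first time it is assembled; later requests for the same trial accounts would be served from disk rather than fetched again.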